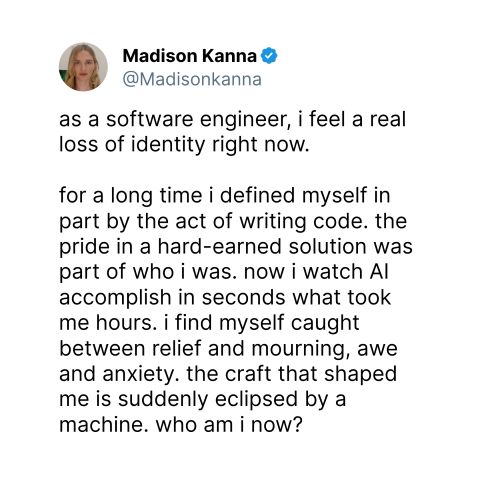

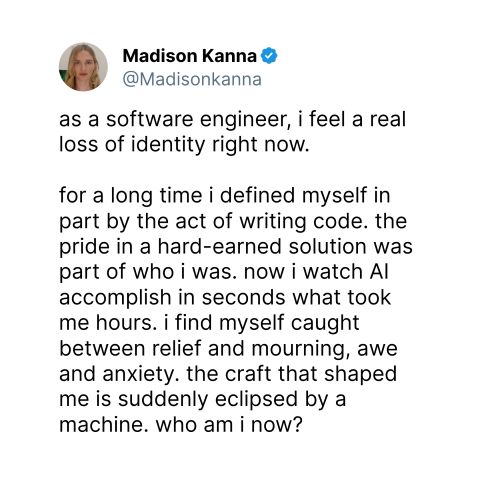

LinkedIn Content Strategy & Writing Style

Director, Google Cloud AI. Best-selling Author. Speaker. AI, DX, UX. I want to see you win.

1 person tracking this creator on Viral Brain

Addy Osmani positions himself as a pragmatic bridge between high-level AI strategy and ground-level engineering craft, leveraging his role at Google to provide a front-row seat to the future of software development. His content strategy centers on operationalizing AI for developers, moving beyond hype to focus on specific tools like the Model Context Protocol (MCP), agentic workflows, and the evolving taxonomy of UI components. What makes him notable is his refusal to sacrifice engineering discipline for automation; he consistently advocates for maintaining human judgment and "taste" while aggressively adopting AI to eliminate friction. This creates a unique intersection of enterprise product leadership and technical mentorship, where he simultaneously launches global Google Cloud features while publishing deeply practical O'Reilly guides on how individual contributors can remain "effective" in an increasingly abstracted world.

251.6K

6.7K

942

—

7.9

39

5

As software engineers, our identity was never "the person who can write code" - it was "the person who can solve problems with software." For 50+ years, writing code has been the unavoidable tax we p…

An engineer at Anthropic wrote a spec, pointed Claude at an Asana board, and went home. Claude did the rest. Claude broke the spec into tickets, spawned agents for each one, and they started building…

Announcing big changes to Gemini in Chrome! Agentic browsing arrives. Today marks a massive shift in how we experience the web. Starting today, Gemini in Google Chrome is evolving from an assistant i…

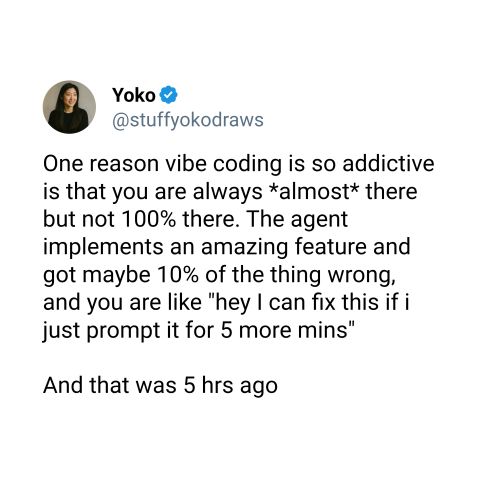

The real engineering skill isn't getting AI to 100% - it's recognizing when you've hit the point of diminishing returns at 90%. That's your cue to take the handoff. You're not "giving up" by reading…

Announcing Web Quality Skills: let AI agents write higher-quality code! 🚀 I'm excited to share Web Quality Skills - a comprehensive collection of Agent Skills designed to teach your AI coding assist…

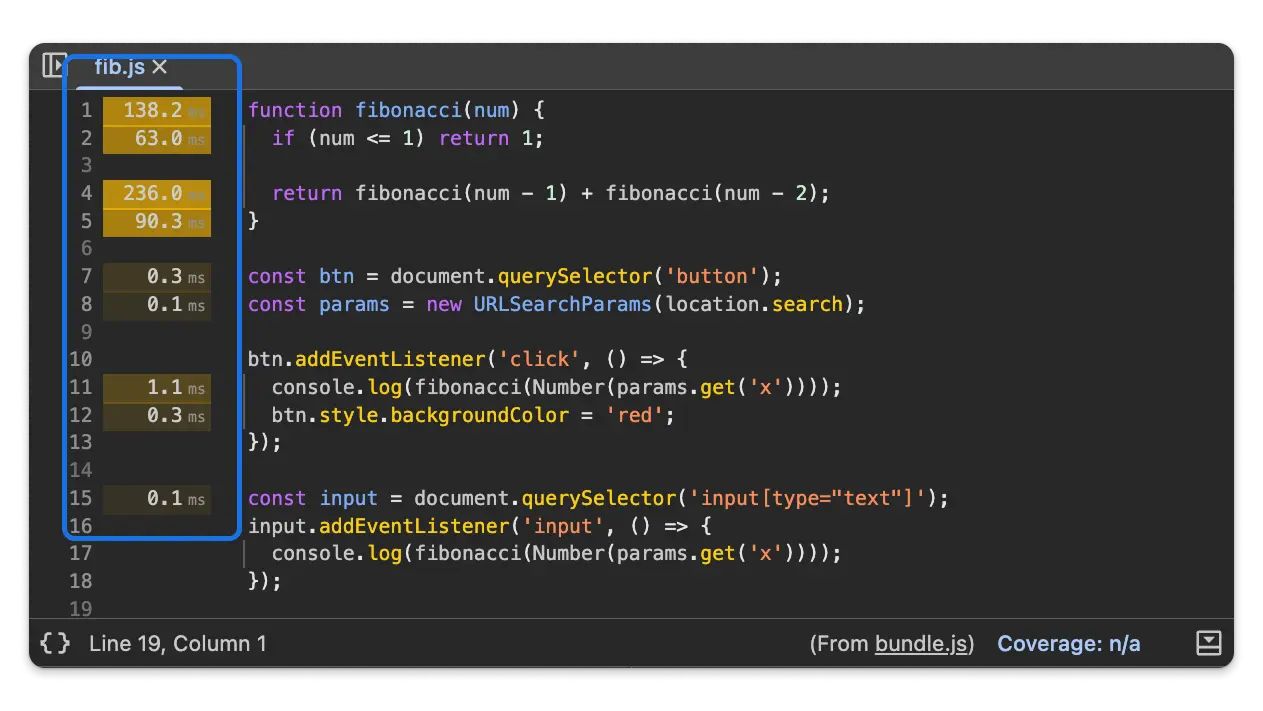

Tip: Chrome DevTools lets you see line-level performance! and now it's even more precise! We should all want our users to have a fast, great experience. If you use Chrome DevTools, you may be familia…

| Metric | Value |
| --- | --- |
| Posts / Week | 7.9 |
| Days Between Posts | 1 |
| Total Posts Analyzed | 5 |
| Posting Frequency | HIGH |
| Avg Engagement Rate | 942% |
| Performance Trend | STABLE |
| Avg Length (Words) | 260 |
| Depth Level | HIGH |
| Expertise Level | ADVANCED |
| Uniqueness Score | 0.86/10 |
| Question Usage | YES |
| Response Rate | 0.15% |

Writing style breakdown

<start of post>

The "Context Window" is the new "Memory Management" - and most developers are still leaking tokens.

My latest deep-dive on architectural efficiency: https://lnkd.in/gX9zR2p ✍

In the early days of C, you had to be obsessed with every byte. If you didn't manage your pointers, your app crashed. Today, we have garbage collection for code, but we don't have it for context. We are shoving massive, unoptimized prompts into LLMs and wondering why the "reasoning" feels sluggish or the costs are skyrocketing.

We are entering the era of Context Engineering.

The quality of an agent's output is inversely proportional to the noise in its prompt. When you provide a 100k token context window filled with irrelevant Slack logs and outdated documentation, you aren't giving the model "more to work with." You are diluting the signal.

🎯 Dynamic Pruning: Only injecting the specific module definitions relevant to the current ticket.

🧠 Semantic Routing: Using a smaller model to decide which "knowledge chunks" the larger model actually needs to see.

⚡ State Compression: Summarizing previous agent turns rather than re-sending the entire history.

The mental model for 2026? You aren't just a prompt engineer. You are a context architect. You are building the filters that ensure the AI only sees the "truth" of the codebase, not the "noise" of the repository.

I've been experimenting with this on our internal tools at Google, and the results are clear: smaller, high-precision contexts outperform "infinite" windows every single time. Verification becomes easier, and the "hallucination" rate drops to near zero.

How is your team handling context bloat? Are you still using "select all," or are you building smarter retrieval layers?

#ai #programming #softwareengineering

<end of post>
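Two of the techniques named in the sample post, dynamic pruning and state compression, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the method used in the post or at Google: the names `Chunk`, `estimate_tokens`, `prune_chunks`, and `compress_history` are hypothetical, relevance is plain keyword overlap, token counts are approximated by word counts, and "summarizing" is stood in for by truncation. Semantic routing is left out because it requires a call to a second model.

```python
from dataclasses import dataclass


@dataclass
class Chunk:
    """A hypothetical unit of context, e.g. one module definition."""
    name: str
    text: str


def estimate_tokens(text: str) -> int:
    # Assumption: word count as a rough stand-in for a real tokenizer.
    return len(text.split())


def prune_chunks(chunks: list[Chunk], ticket: str, budget: int) -> list[Chunk]:
    """Dynamic pruning: keep only chunks that share keywords with the
    ticket, most relevant first, until the token budget is used up."""
    ticket_words = set(ticket.lower().split())
    scored = []
    for chunk in chunks:
        overlap = len(ticket_words & set(chunk.text.lower().split()))
        if overlap > 0:  # chunks with no shared keywords are dropped
            scored.append((overlap, chunk))
    scored.sort(key=lambda pair: pair[0], reverse=True)

    selected, used = [], 0
    for _, chunk in scored:
        cost = estimate_tokens(chunk.text)
        if used + cost <= budget:
            selected.append(chunk)
            used += cost
    return selected


def compress_history(turns: list[str], keep_last: int = 2, max_words: int = 8) -> list[str]:
    """State compression: keep the most recent turns verbatim and shorten
    older ones. Truncation here is a placeholder for a real summarizer."""
    split = max(len(turns) - keep_last, 0)
    compressed = []
    for turn in turns[:split]:
        words = turn.split()
        short = " ".join(words[:max_words])
        if len(words) > max_words:
            short += " ..."
        compressed.append(short)
    return compressed + turns[split:]


if __name__ == "__main__":
    chunks = [
        Chunk("auth", "login token session refresh handler"),
        Chunk("billing", "invoice payment stripe webhook"),
        Chunk("logs", "slack chatter lunch plans"),
    ]
    kept = prune_chunks(chunks, "fix login session bug", budget=10)
    print([c.name for c in kept])
    print(compress_history(["a b c d e f g h i j", "second turn", "third turn"], max_words=3))
```

In a real pipeline the keyword overlap would likely be replaced by embedding similarity and the truncation by a model-generated summary; the budget-driven selection loop is the part that carries over.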
