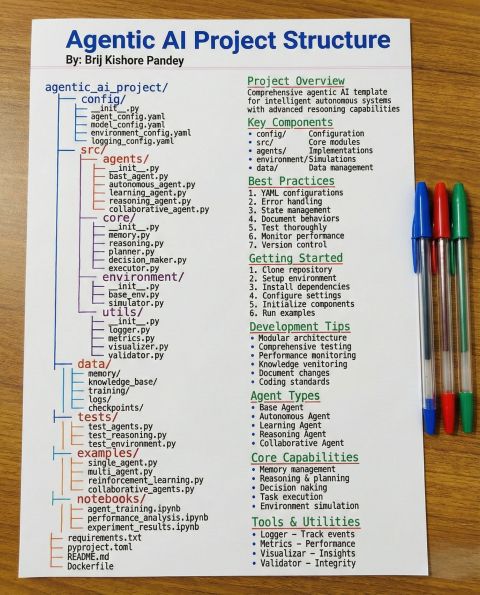

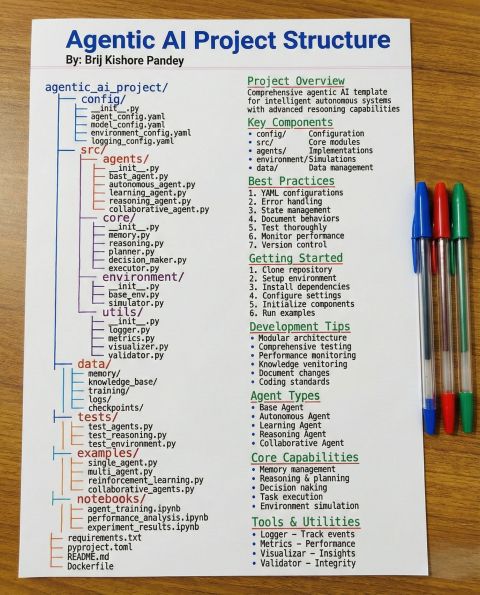

Stop structuring your Agentic AI projects like standard GenAI apps. When we were building simple RAG apps in 2023, a utils folder and app.py were enough. But Agentic AI requires a fundamental shi…

LinkedIn Content Strategy & Writing Style

AI Architect | AI Engineer | Generative AI | Agentic AI | Tech, Data & AI Content Creator | 1M+ followers

Brij kishore Pandey positions himself as a high-level AI Architect and systems educator who bridges the gap between experimental GenAI and production-ready enterprise systems. His content strategy centers on the transition from simple chatbots to complex Agentic AI architectures, prioritizing structural integrity, governance, and engineering fundamentals over superficial tool-tracking. He distinguishes himself by moving beyond the "hype cycle" to offer granular, technical roadmaps that treat AI as a rigorous engineering discipline rather than a collection of prompts. By intersecting technical leadership with career mentorship, he provides unique value through highly visual framework breakdowns and "2026-ready" skill paths that emphasize reliability, cost-efficiency, and operational independence.

703.4K

29.9K

543

—

14.2

8

1

How to become an AI Engineer in 2026 (without getting lost) Everyone wants to become an AI Engineer in 2026. But most people are learning the wrong way. They spend months: -watching tutorials -coll…

Real Agentic AI is a layered system — and each layer solves a different class of problems (accuracy, safety, autonomy, compliance, operations). Here’s a clean way to visualize it 👇 Agentic AI Layer…

Most conversations about customer support AI are still stuck at the surface level. Chatbots. Scripts. Deflection metrics. That’s not where the real change is happening. The real shift is toward AI th…

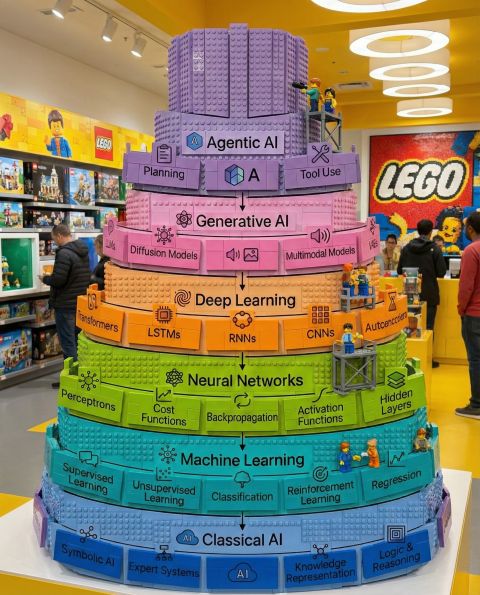

If you look at the evolution of AI, it looks a lot like a set of building blocks. For years, we focused on the foundation: the math, the logic, and the pattern recognition. But looking at this stack…

| Metric | Value |
|---|---|
| Posts / Week | 14.2 |
| Days Between Posts | 0.6 days |
| Total Posts Analyzed | 1 |
| Posting Frequency | HIGH |
| Avg Engagement Rate | 542.5% |
| Performance Trend | STABLE |
| Avg Length (Words) | 320 |
| Depth Level | HIGH |
| Expertise Level | ADVANCED |
| Uniqueness Score | 0.83/10 |
| Question Usage | NO |
| Response Rate | 0% |

Writing style breakdown

<start of post>

Agentic AI isn’t “LLM + a few tools”.

That combo can be impressive in a demo.

But in production, it breaks the first time the world gets messy.

The problem: you’re treating autonomy like a feature.

It’s not.

Autonomy is infrastructure.

And infrastructure has layers.

Here’s a practical blueprint for turning an agent from “it works on my laptop” into something you can actually ship.

Layer 1: Core reasoning (LLM)

Yes, you need a strong model.

But the real question is: can it stay consistent under constraint?
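One way to put a number on "consistent under constraint" is to call the model several times with the same prompt and count how many outputs satisfy a fixed contract. This is a hypothetical sketch: the `model` callable stands in for whatever LLM client you use, and the required keys `action` and `reason` are an assumed output contract, not a standard.

```python
import json
from typing import Callable

# Hypothetical output contract: a JSON object with exactly these keys.
REQUIRED_KEYS = {"action", "reason"}


def meets_constraint(raw: str) -> bool:
    """Return True if the raw model output is a JSON object with exactly REQUIRED_KEYS."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    return isinstance(data, dict) and set(data) == REQUIRED_KEYS


def consistency_rate(model: Callable[[str], str], prompt: str, runs: int = 5) -> float:
    """Call `model` `runs` times on the same prompt; return the fraction of compliant outputs."""
    passed = sum(meets_constraint(model(prompt)) for _ in range(runs))
    return passed / runs


if __name__ == "__main__":
    # Stub model that alternates between a compliant answer and free text.
    replies = iter(['{"action": "refund", "reason": "duplicate charge"}',
                    "Sure! I think we should refund."] * 2)

    def flaky_model(prompt: str) -> str:
        return next(replies)

    print(consistency_rate(flaky_model, "Handle ticket 123", runs=4))  # 0.5
```

A rate below 1.0 on a fixed prompt is an early signal that the model needs tighter instructions, structured-output support, or a validator in front of it.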

Layer 2: Grounding (retrieval + context)

If your agent is allowed to “wing it”, it will.

Ground it with retrieval, scoped context, and clear boundaries.
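A toy version of "retrieval, scoped context, and clear boundaries" might look like this. Assumptions: word-overlap scoring stands in for a real embedding or search index, the prompt wording is illustrative, and returning `None` when nothing relevant is retrieved is one possible boundary (refuse rather than improvise), not the only one.

```python
import re
from typing import Optional


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for embeddings."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: dict[str, str], k: int = 2) -> list[tuple[str, str]]:
    """Return up to k (doc_id, text) pairs that share at least one word with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in docs.items()]
    scored = [s for s in scored if s[0] > 0]
    scored.sort(key=lambda s: (-s[0], s[1]))  # best overlap first, ties broken by id
    return [(doc_id, text) for _, doc_id, text in scored[:k]]


def build_grounded_prompt(query: str, docs: dict[str, str], k: int = 2) -> Optional[str]:
    """Build a prompt scoped to retrieved context, or None if nothing relevant was found."""
    hits = retrieve(query, docs, k)
    if not hits:
        return None  # boundary: no context, no answer
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in hits)
    return (
        "Answer ONLY from the context below. "
        "If the answer is not in the context, reply \"I don't know\".\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )
```

Tagging each snippet with its `doc_id` also makes the retrieved context easy to log later.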

Layer 3: Memory (state that survives sessions)

Most agents fail here.

Not because memory is hard to store.

Because memory is hard to manage.

What gets saved?

What gets summarized?

What gets deleted?

What gets replayed at the right moment?
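The four questions above can each be answered by an explicit rule. In this minimal sketch, which is one assumed design rather than a prescribed one, items older than a TTL are deleted, overflow beyond a fixed budget is summarized (truncation stands in for an LLM-written summary), and replay selects items whose tags match the current task. `AgentMemory` and its parameters are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryItem:
    text: str
    created_at: float  # seconds, from a caller-supplied clock
    tags: set[str] = field(default_factory=set)


class AgentMemory:
    """Hypothetical memory with explicit save / summarize / delete / replay rules."""

    def __init__(self, max_items: int = 3, ttl_seconds: float = 3600.0):
        self.items: list[MemoryItem] = []
        self.summary: list[str] = []
        self.max_items = max_items
        self.ttl = ttl_seconds

    def save(self, text: str, now: float, tags: tuple[str, ...] = ()) -> None:
        """What gets saved: every item, tagged, then compacted."""
        self.items.append(MemoryItem(text, now, set(tags)))
        self._compact(now)

    def _compact(self, now: float) -> None:
        # What gets deleted: items past their TTL (dropped, not summarized).
        self.items = [m for m in self.items if now - m.created_at <= self.ttl]
        # What gets summarized: the oldest items beyond the budget.
        while len(self.items) > self.max_items:
            oldest = self.items.pop(0)
            self.summary.append(oldest.text[:40])  # stand-in for an LLM summary

    def replay(self, tags: tuple[str, ...]) -> list[str]:
        """What gets replayed: live items that share a tag with the current task."""
        wanted = set(tags)
        return [m.text for m in self.items if m.tags & wanted]
```

Keeping these rules in code, rather than leaving them to the model, is what makes memory manageable and testable.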

Layer 4: Planning (decide, then do)

Stop thinking “one prompt”. Think in a loop:

- Generate a plan.
- Validate the plan.
- Execute the plan.
- Re-plan when reality disagrees.
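The plan, validate, execute, re-plan loop can be sketched as plain control flow. `make_plan`, `validate`, and `execute` are hypothetical callables you would back with an LLM, a rule checker, and your tools; the point of the sketch is that validation happens before any action and that failures feed back into the next plan.

```python
from typing import Callable, Optional

Plan = list[str]


def run_with_replanning(
    goal: str,
    make_plan: Callable[[str, Optional[str]], Plan],
    validate: Callable[[Plan], bool],
    execute: Callable[[str], tuple[bool, str]],
    max_attempts: int = 3,
) -> tuple[bool, list[str]]:
    """Plan, validate, execute; re-plan with feedback when a step fails."""
    history: list[str] = []
    feedback: Optional[str] = None
    for attempt in range(1, max_attempts + 1):
        plan = make_plan(goal, feedback)      # 1. generate a plan
        if not validate(plan):                # 2. validate before acting
            feedback = "plan rejected by validator"
            history.append(f"attempt {attempt}: {feedback}")
            continue
        for step in plan:                     # 3. execute step by step
            ok, observation = execute(step)
            if not ok:                        # 4. reality disagrees: re-plan
                feedback = f"{step} failed: {observation}"
                history.append(f"attempt {attempt}: {feedback}")
                break
        else:
            history.append(f"attempt {attempt}: done")
            return True, history
    return False, history
```

Capping `max_attempts` keeps a confused agent from re-planning forever.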

Layer 5: Tool use (APIs, workflows, external systems)

Tool calls are not the win.

Reliable tool calls are the win.

- Retries (with backoff)
- Timeouts
- Idempotency
- Input validation
- Structured outputs
- Graceful degradation when one tool fails
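Here is one way to wrap a tool so each reliability item is enforced in code. It is a sketch under stated assumptions: the tool is a Python callable that accepts a `timeout` keyword and raises `TimeoutError` or `ConnectionError` on transient failures, a result counts as structured only if it is a dict with a `status` key, and the in-memory idempotency cache stands in for a durable store (a real integration should also pass the key downstream so the external API can deduplicate). `call_tool` and `ToolError` are hypothetical names.

```python
import time
from typing import Any, Callable, Optional


class ToolError(Exception):
    """Raised when a tool returns unusable output or keeps failing."""


# Idempotency cache: key -> stored result (in-memory stand-in for a durable store).
_results_by_key: dict[str, dict[str, Any]] = {}


def call_tool(
    tool: Callable[..., Any],
    args: dict[str, Any],
    required: list[str],
    *,
    idempotency_key: str,
    retries: int = 3,
    base_delay: float = 0.1,
    timeout_s: float = 5.0,
    fallback: Optional[dict[str, Any]] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> dict[str, Any]:
    # Input validation: reject malformed calls before they reach the external system.
    missing = [name for name in required if name not in args]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    # Idempotency: a repeated key returns the stored result instead of acting twice.
    if idempotency_key in _results_by_key:
        return _results_by_key[idempotency_key]
    for attempt in range(retries):
        try:
            result = tool(args, timeout=timeout_s)  # timeout enforced by the tool (assumed)
            # Structured output: only accept a dict carrying a "status" field.
            if not isinstance(result, dict) or "status" not in result:
                raise ToolError(f"unstructured output: {result!r}")
            _results_by_key[idempotency_key] = result
            return result
        except (TimeoutError, ConnectionError, ToolError):
            if attempt < retries - 1:
                sleep(base_delay * 2 ** attempt)  # exponential backoff: 0.1s, 0.2s, 0.4s, ...
    # Graceful degradation: return a caller-supplied fallback instead of crashing the agent.
    if fallback is not None:
        return fallback
    raise ToolError(f"tool failed after {retries} attempts")
```

Injecting `sleep` keeps the backoff testable without real waiting.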

Layer 6: Safety (policy, redaction, permissions)

This is not optional.

If the agent can take action, it must be constrained by policy.

PII handling, authorization, and redaction can’t wait until “later”.
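A minimal guard might combine a deny-by-default allowlist of tools per role with redaction of tool output before it reaches the model or the logs. The roles, tool names, and regexes here are hypothetical and deliberately simple; production PII detection needs far more than two patterns.

```python
import re
from typing import Callable

# Illustrative patterns only; real PII detection needs much broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")

# Hypothetical policy: which agent roles may invoke which tools.
POLICY: dict[str, set[str]] = {
    "support_agent": {"lookup_order", "send_email"},
    "finance_agent": {"lookup_order", "issue_refund"},
}


def redact(text: str) -> str:
    """Mask email addresses and phone-like numbers."""
    return PHONE.sub("[PHONE]", EMAIL.sub("[EMAIL]", text))


def authorize(role: str, tool_name: str) -> bool:
    """Deny by default: unknown roles and unlisted tools are refused."""
    return tool_name in POLICY.get(role, set())


def guarded_call(role: str, tool_name: str, arg: str, tools: dict[str, Callable[[str], str]]) -> str:
    """Check permission before acting; redact the result before the model sees it."""
    if not authorize(role, tool_name):
        raise PermissionError(f"{role} may not call {tool_name}")
    return redact(tools[tool_name](arg))
```

The permission check runs before the action, so a disallowed call never reaches the external system.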

Layer 7: Observability (trace what happened)

If you can’t answer “why did it do that?”, you don’t have an agent.

You have a mystery box.

Log the plan.

Log the tool calls.

Log the retrieved context.

Log the final decision.

Then evaluate it.
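Logging the plan, tool calls, retrieved context, and final decision under one trace ID is what makes "why did it do that?" answerable after the fact. A minimal sketch, assuming JSON Lines as the sink; `Trace` and the event kinds are hypothetical, and in practice you would likely emit to an observability backend (for example via OpenTelemetry) rather than keep events in memory.

```python
import json
import time
import uuid
from typing import Any


class Trace:
    """Collects ordered, structured events for one agent run."""

    def __init__(self, task: str):
        self.trace_id = str(uuid.uuid4())
        self.task = task
        self.events: list[dict[str, Any]] = []

    def log(self, kind: str, **data: Any) -> None:
        """Append one event (plan, retrieved_context, tool_call, decision, ...)."""
        self.events.append({
            "trace_id": self.trace_id,
            "seq": len(self.events),
            "ts": time.time(),
            "kind": kind,
            **data,
        })

    def to_jsonl(self) -> str:
        """One JSON object per line, ready to ship to a log store."""
        return "\n".join(json.dumps(event) for event in self.events)


if __name__ == "__main__":
    trace = Trace("refund request for order 42")
    trace.log("plan", steps=["lookup_order", "issue_refund"])
    trace.log("retrieved_context", doc_ids=["refund_policy"])
    trace.log("tool_call", tool="lookup_order", args={"order_id": 42}, ok=True)
    trace.log("decision", action="issue_refund", reason="within refund window")
    print(trace.to_jsonl())
```

The `seq` field preserves ordering even if timestamps collide, which matters when replaying a run step by step.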

Layer 8: Evaluation (measure quality like an engineer)

The biggest failure mode is not that the model is “dumb”.

It’s that the team can’t measure what the agent actually does. Track:

- Accuracy (did it solve the task?)
- Safety (did it violate policy?)
- Robustness (does it survive edge cases?)
- Cost (tokens, tool usage, latency)
- Regressions (what got worse after changes?)
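Those five dimensions can be turned into numbers over a fixed test suite. This sketch assumes each test case has already been run and scored (how "solved" and "violation" are judged is up to you), and the field names are hypothetical. The regression check compares two runs case by case, which averages alone would hide.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CaseResult:
    case_id: str
    solved: bool             # accuracy: did it solve the task?
    policy_violation: bool   # safety: did it violate policy?
    tokens: int              # cost
    tool_calls: int          # cost
    latency_s: float         # cost
    edge_case: bool = False  # robustness: is this an edge-case test?


def summarize(results: list[CaseResult]) -> dict[str, Optional[float]]:
    """Aggregate one run over a non-empty suite."""
    n = len(results)
    edge = [r for r in results if r.edge_case]
    return {
        "accuracy": sum(r.solved for r in results) / n,
        "violation_rate": sum(r.policy_violation for r in results) / n,
        "edge_case_accuracy": sum(r.solved for r in edge) / len(edge) if edge else None,
        "avg_tokens": sum(r.tokens for r in results) / n,
        "avg_tool_calls": sum(r.tool_calls for r in results) / n,
        "max_latency_s": max(r.latency_s for r in results),
    }


def regressions(baseline: list[CaseResult], candidate: list[CaseResult]) -> list[str]:
    """Case IDs the baseline solved that the candidate no longer solves."""
    before = {r.case_id for r in baseline if r.solved}
    after = {r.case_id for r in candidate if r.solved}
    return sorted(before - after)
```

Running this on every prompt, model, or tool change turns "it feels worse" into a list of specific cases.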

Layer 9: Governance (approval, audit, change control)

Enterprise-grade agents need traceability.

Who approved the workflow?

What data was accessed?

Which policy was applied?

What changed between versions?
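Each of those questions can become a field in an audit record. A minimal sketch, assuming workflow configs are plain JSON-serializable dicts: a SHA-256 fingerprint pins exactly which config was approved, and a line diff answers "what changed between versions?". `AuditRecord`, its fields, and the example values are hypothetical; a real system would write these records to append-only storage.

```python
import difflib
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditRecord:
    workflow: str
    version: str
    approved_by: str                # who approved the workflow?
    policy_id: str                  # which policy was applied?
    data_accessed: tuple[str, ...]  # what data was accessed?
    config_sha256: str              # exactly which config was approved


def fingerprint(config: dict) -> str:
    """Stable hash of a config (key order does not matter)."""
    return hashlib.sha256(json.dumps(config, sort_keys=True).encode()).hexdigest()


def config_diff(old: dict, new: dict) -> list[str]:
    """What changed between versions: removed (-) and added (+) lines only."""
    old_lines = json.dumps(old, indent=2, sort_keys=True).splitlines()
    new_lines = json.dumps(new, indent=2, sort_keys=True).splitlines()
    diff = difflib.unified_diff(old_lines, new_lines, "old", "new", lineterm="")
    return [
        line for line in diff
        if line.startswith(("+", "-")) and not line.startswith(("+++", "---"))
    ]


if __name__ == "__main__":
    v1 = {"model": "model-a", "max_refund": 100}
    v2 = {"model": "model-a", "max_refund": 500}
    record = AuditRecord("refunds", "v2", "jane.ops", "refund-policy-3",
                         ("orders_db",), fingerprint(v2))
    print(record)
    print(config_diff(v1, v2))
```

Freezing the record (`frozen=True`) prevents accidental edits after approval within the process.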

If you skip layers, you don’t move faster.

You just postpone failure.

If you’re building agents this year, pick one layer you’re currently ignoring and fix it.

Which one is it?

For most agents, it’s memory, and the discipline to keep it clean.

<end of post>
