Extracting text from 90+ file formats. You tried PyMuPDF for PDFs, Tesseract for OCR, Tika for the rest ... Kreuzberg replaces that stack. Content-hash caching drops repeat extractions to sub-10ms. Qu…

LinkedIn Content Strategy & Writing Style

Agents, Graphs, Ontologies

André Lindenberg positions himself as a high-level architect at the intersection of agentic engineering and knowledge representation, moving far beyond surface-level AI hype to focus on the structural "plumbing" of intelligent systems. His content strategy centers on the rigorous optimization of developer workflows, specifically through token efficiency, local LLM orchestration, and the integration of formal ontologies into generative models. What makes him notable is his ability to bridge the gap between abstract semantic modeling and pragmatic open-source implementation, often spotlighting specialized tools that solve the "unsexy" but critical problems of memory management and data extraction. By blending deep technical tutorials on agent memory with philosophical insights into ontological prisms, he provides a unique value proposition for engineers who need their AI agents to be both cost-effective and logically consistent.

Install codeburn, then run codeburn optimize. That command scans your coding agent sessions for specific waste ... repeated file reads, low read-to-edit ratios, uncapped bash output, unused MCP server…

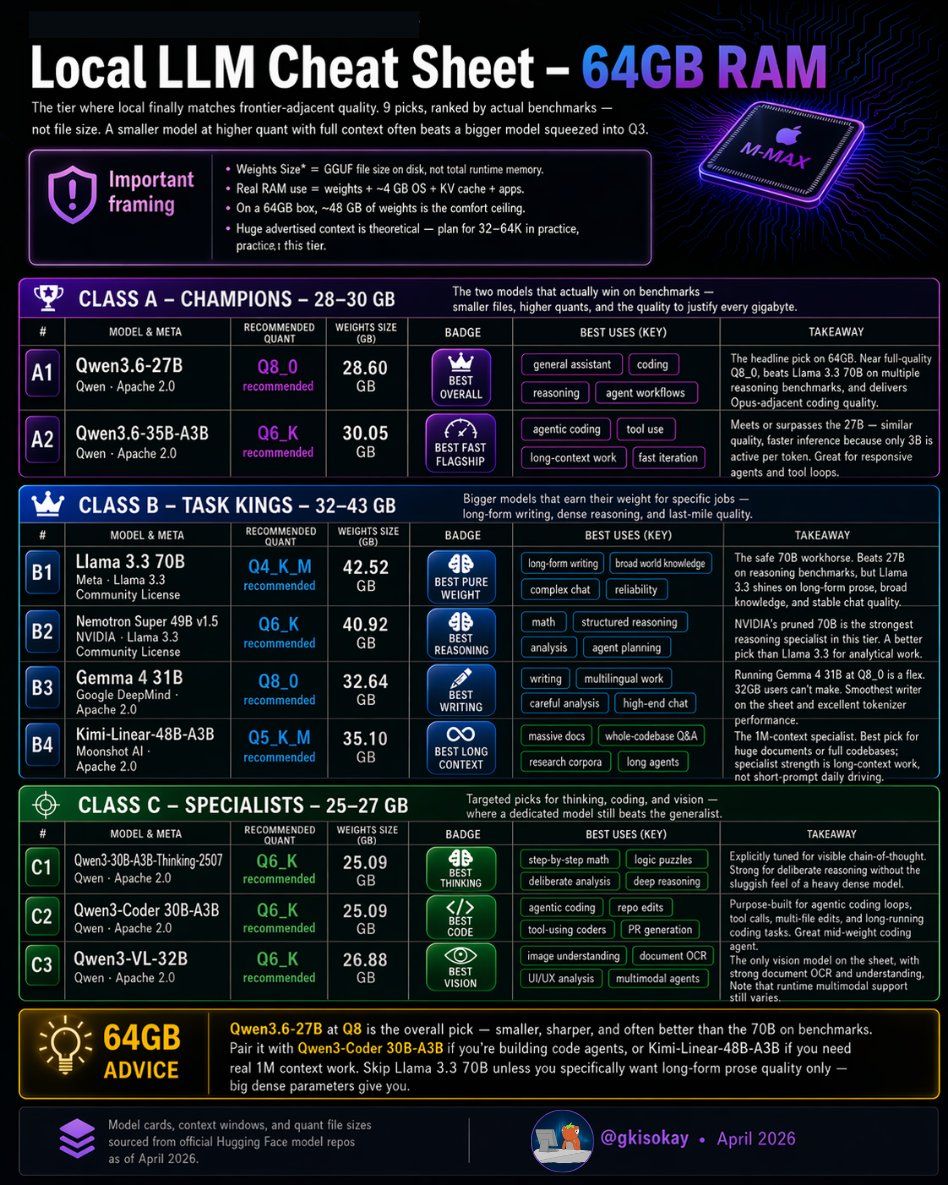

Five of nine models on this 64GB local LLM cheat sheet are Qwen ... dense 27B flagship at Q8_0, MoE 35B-A3B for speed, dedicated coding, vision, and thinking variants at Q6_K. The rest fills specific…

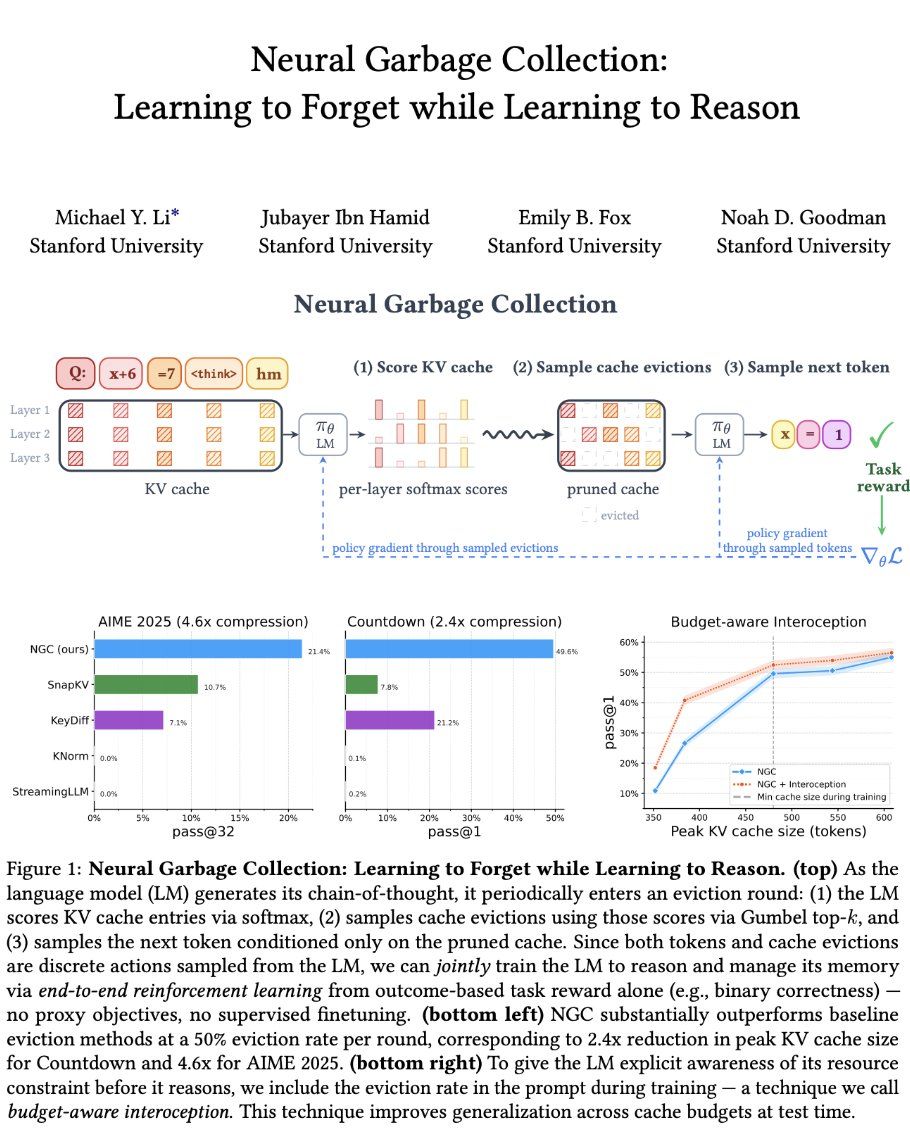

Stanford's Neural Garbage Collection trains a reasoning model to evict its own KV cache blocks using the same RL reward that teaches it to reason. No new modules ... it repurposes existing attention s…

Commercial photogrammetry charges thousands per seat. ODM does the same job from a Docker one-liner … drop JPEGs in a folder, run one command, get georeferenced orthophotos, classified point clouds, D…

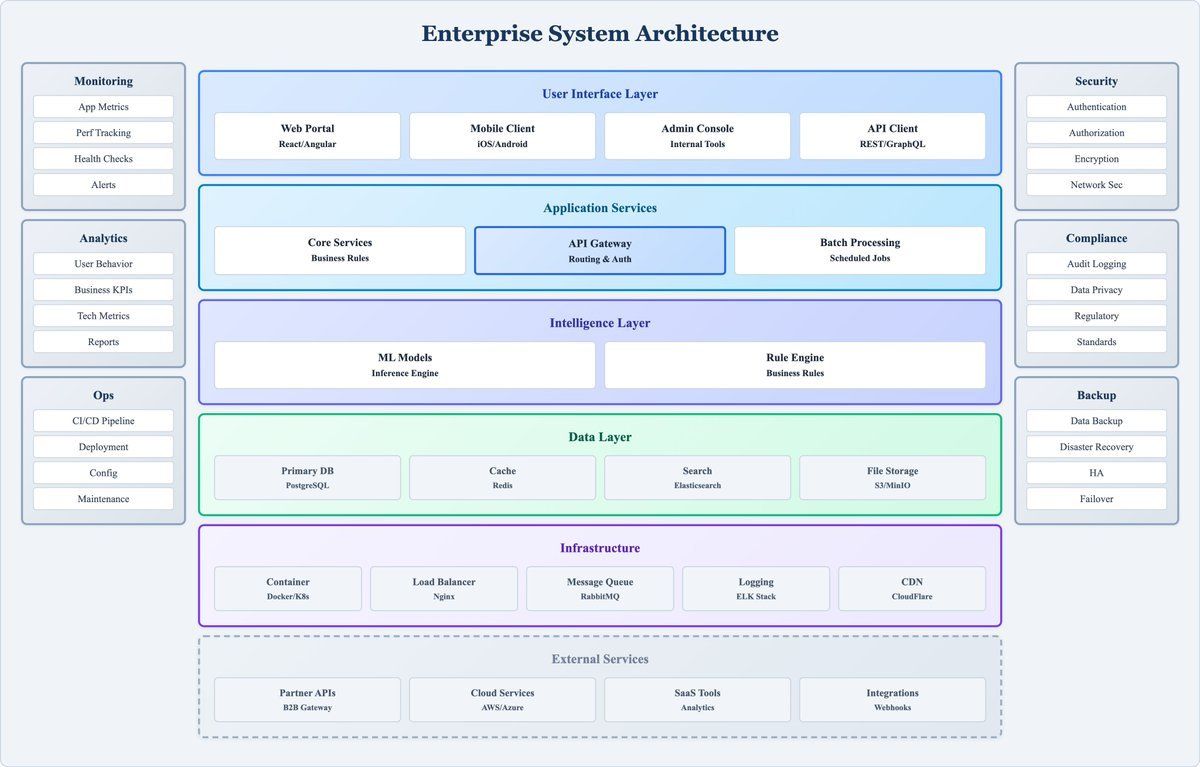

Fourteen skills that teach AI coding agents to generate diagrams in Markdown, organized by rendering engine. Nine PlantUML-based skills cover UML, cloud architecture, network topology, security, Archi…

| Metric | Value |
| --- | --- |
| Posts / Week | 55.8 |
| Days Between Posts | 0.1 |
| Total Posts Analyzed | 1 |
| Posting Frequency | HIGH |
| Avg Engagement Rate | 218.6% |
| Performance Trend | STABLE |
| Avg Length (Words) | 110 |
| Depth Level | HIGH |
| Expertise Level | ADVANCED |
| Uniqueness Score | 0.86/10 |
| Question Usage | NO |
| Response Rate | 0% |

Writing style breakdown

<start of post>

Your local inference server is leaking VRAM because it fails to deallocate inactive model shards after a context timeout. This week’s Stack Analysis breaks down how to implement a TTL-based eviction policy using a simple Python wrapper and the NVIDIA Management Library (NVML). Three scripts, zero dependencies outside of the driver, and a 40% reduction in 'Out of Memory' errors for multi-user environments.

The wrapper monitors process-specific memory usage every 500ms. When a process hits the 90% threshold, it triggers a SIGTERM to the oldest idle worker ... then re-initializes the shard only when a new request hits the queue. SQLite tracks the timestamps. It’s the kind of 'dumb' fix that saves you from buying a second A100 just to handle zombie processes.

automated shard eviction

NVML-based monitoring

500ms polling interval

SQLite state persistence

Works with vLLM, Ollama, and raw PyTorch deployments. MIT, 4.2K stars.

#VRAM #LLMOps #NVIDIA #vLLM #OpenSource #InferenceEfficiency

<end of post>
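
The eviction loop described in the sample post can be sketched roughly as follows. This is a minimal illustration, not the wrapper the post refers to: the class name `IdleWorkerEvictor`, the `idle_ttl_s` parameter, the table layout, and the injectable `read_used_fraction` and `terminate` callables are all assumptions made for the example. In a real deployment the memory reading would presumably come from an NVML binding and `terminate` would send SIGTERM; both are stubbed here so the sketch runs without a GPU.

```python
import os
import signal
import sqlite3
import time
from typing import Callable, Optional


class IdleWorkerEvictor:
    """Hypothetical sketch: last-use timestamps live in SQLite, and the
    oldest idle worker is evicted once memory use crosses a threshold."""

    def __init__(self, db_path: str = ":memory:",
                 threshold: float = 0.90, idle_ttl_s: float = 60.0):
        self.db = sqlite3.connect(db_path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS workers ("
            "pid INTEGER PRIMARY KEY, last_used REAL NOT NULL)"
        )
        self.threshold = threshold    # fraction of memory in use (0.90 = 90%)
        self.idle_ttl_s = idle_ttl_s  # assumed definition of "idle"

    def touch(self, pid: int, now: Optional[float] = None) -> None:
        """Record that a worker just served a request."""
        now = time.time() if now is None else now
        self.db.execute(
            "INSERT OR REPLACE INTO workers (pid, last_used) VALUES (?, ?)",
            (pid, now),
        )
        self.db.commit()

    def oldest_idle(self, now: Optional[float] = None) -> Optional[int]:
        """Return the pid that has been idle longest, or None."""
        now = time.time() if now is None else now
        row = self.db.execute(
            "SELECT pid FROM workers WHERE last_used <= ? "
            "ORDER BY last_used ASC LIMIT 1",
            (now - self.idle_ttl_s,),
        ).fetchone()
        return row[0] if row else None

    def check(self, used_fraction: float,
              terminate: Callable[[int], None],
              now: Optional[float] = None) -> Optional[int]:
        """Evict at most one worker if memory use is at or above threshold."""
        if used_fraction < self.threshold:
            return None
        pid = self.oldest_idle(now)
        if pid is None:
            return None
        terminate(pid)
        self.db.execute("DELETE FROM workers WHERE pid = ?", (pid,))
        self.db.commit()
        return pid


def sigterm(pid: int) -> None:
    """Default terminate action: ask the worker process to exit."""
    os.kill(pid, signal.SIGTERM)


def run(evictor: IdleWorkerEvictor,
        read_used_fraction: Callable[[], float],
        terminate: Callable[[int], None] = sigterm,
        interval_s: float = 0.5,
        max_polls: Optional[int] = None) -> None:
    """Poll memory use every interval_s seconds (500 ms by default)."""
    polls = 0
    while max_polls is None or polls < max_polls:
        evictor.check(read_used_fraction(), terminate)
        time.sleep(interval_s)
        polls += 1
```

Re-initializing a shard when a new request arrives is left out; in this sketch the serving layer would restart the worker and call `touch` again. Passing a file path as `db_path` keeps the timestamps across restarts of the monitor.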
